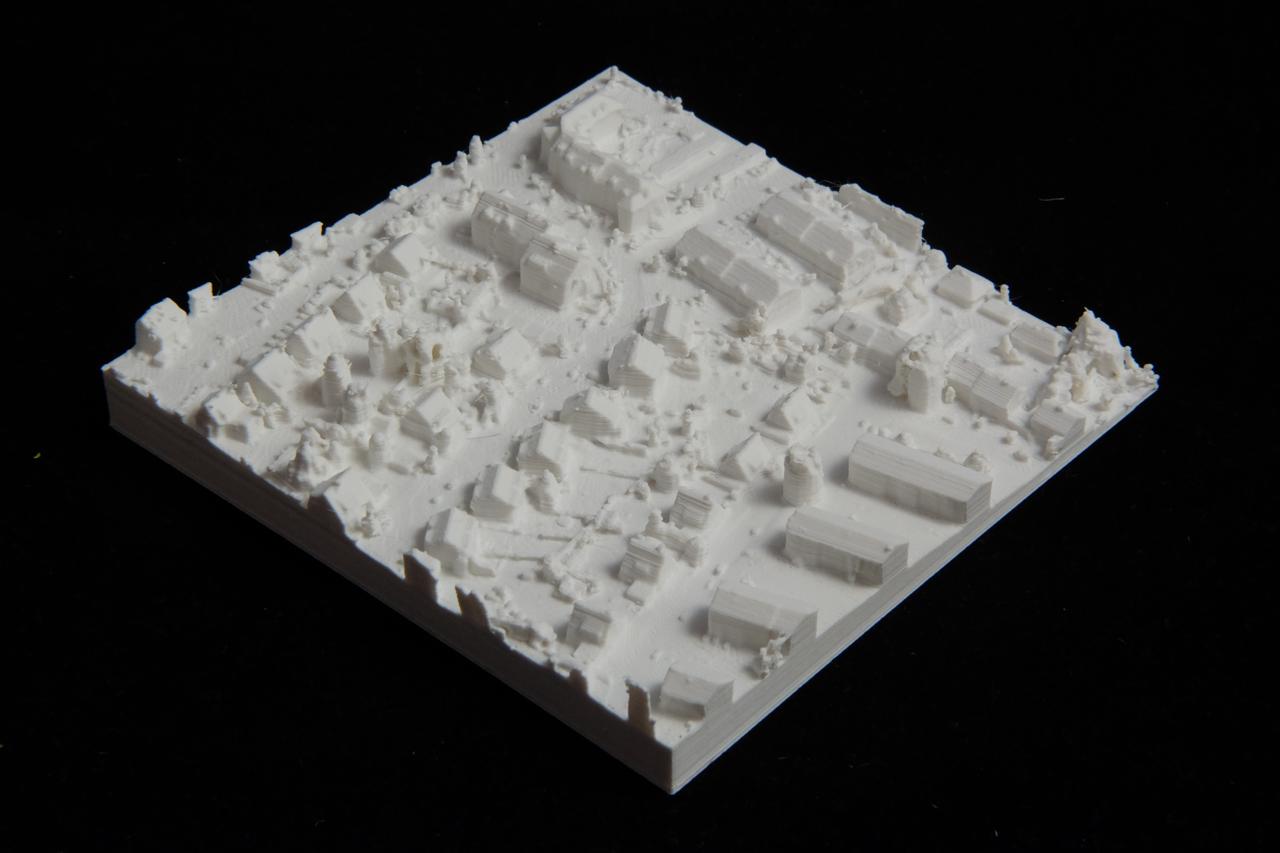

Generating an infinite world with the Wave Function Collapse algorithm

This article describes how I generate an infinite city using the Wave Function Collapse (WFC) algorithm in a way that is fast, deterministic, parallelizable, and reliable. It's a follow-up to my 2019 article on adapting the WFC algorithm to generate an infinite world. The new approach presented in this article removes the limitations of my original implementation.